Justice in a Patient Bill of Rights

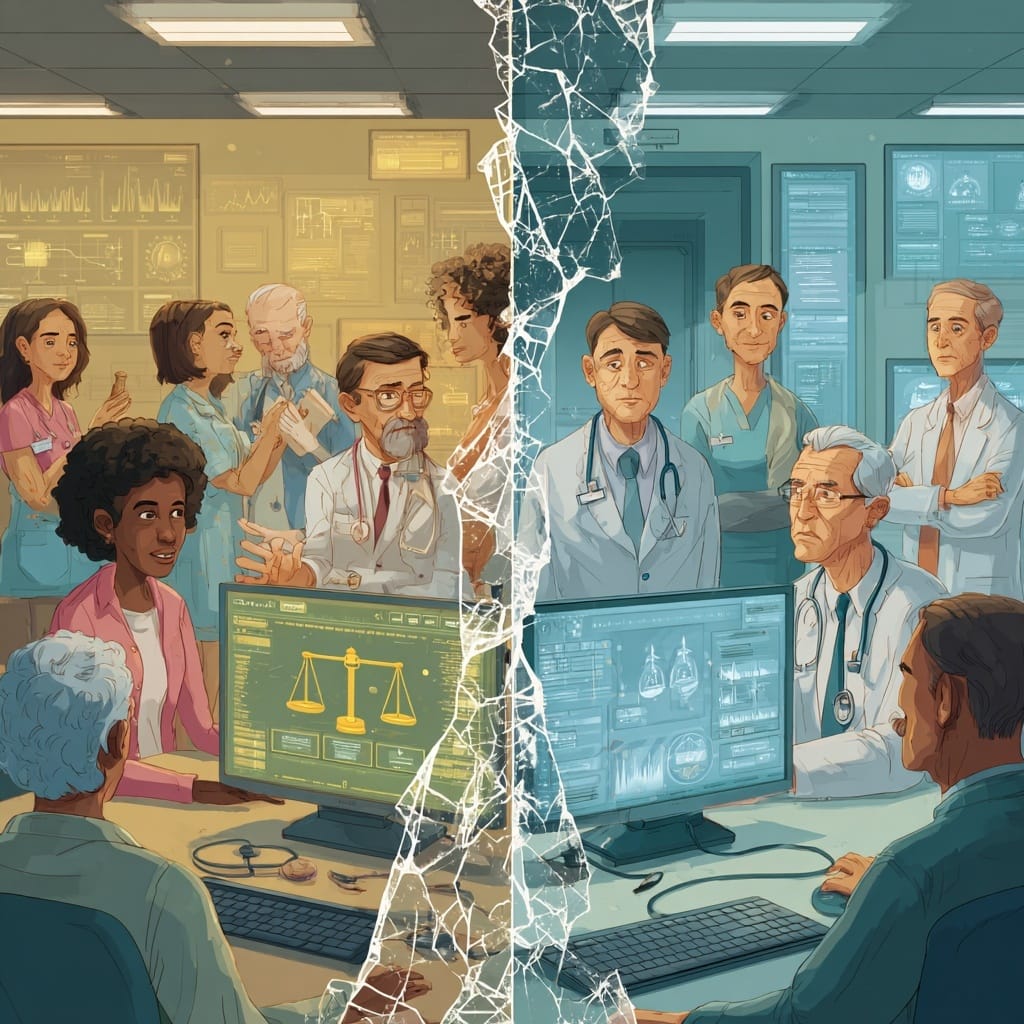

Ensuring Fair Distribution of AI's Promise and the Potential for Peril

The Bottom Line Up Front: Justice in healthcare AI demands that we actively design systems to close, not widen, existing health disparities. This means confronting algorithmic bias head-on, ensuring diverse representation in data and development teams, and implementing robust equity frameworks throughout the AI lifecycle.

If Beneficence asks "Does this AI help patients?" and Respect for Persons asks "Does this AI honor patient autonomy?", then Justice asks the hardest question of all: "Does this AI make healthcare more fair for everyone?"

The principle of Justice in healthcare traditionally focuses on the fair distribution of benefits, risks, and resources. In the AI era, this principle takes on profound new urgency. AI has the power to either dramatically reduce health disparities or catastrophically amplify them, and the choice is entirely in our hands as healthcare and AI professionals.

The Stark Reality: AI's Bias Problem is Our Problem

The evidence is mounting that current AI systems are failing the justice test. A 2024 systematic review published in the Journal of Racial and Ethnic Health Disparities found that AI algorithms consistently exhibit significant racial and ethnic bias across multiple healthcare domains. In the most widely cited example (Obermeyer et al., 2019), correcting the bias in a commercial risk algorithm would have raised the share of Black patients identified for additional help from 17.7% to 46.5%.

Consider these troubling examples from recent research:

Diagnostic Imaging: Dermatological AI systems may misdiagnose skin conditions in individuals with darker skin tones because those skin tones are underrepresented in training datasets, potentially missing critical melanomas in the very populations that experience the highest mortality rates from delayed diagnosis. Most of us have heard this example, but we could do better at treating it as a standing reminder in our own AI projects.

Risk Prediction: An analysis of the widely cited Optum algorithm, used to manage care for millions of patients, found that at a given risk score, Black patients were considerably sicker than White patients, as evidenced by signs of uncontrolled illness. Because the algorithm used healthcare costs as a proxy for health needs, it systematically underestimated Black patients' care needs: historical spending disparities meant less money had been spent caring for them.

Language and Cultural Barriers: AI models used in primary care settings misclassified 20% of patients who preferred Spanish as preferring English because of imbalanced training data, creating the potential for dangerous communication breakdowns in care.
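The cost-as-proxy mechanism behind the Optum example can be reproduced in a few lines. This is a deliberately simplified toy simulation, not the Obermeyer et al. analysis: two hypothetical groups have identical illness burden by construction, but group B is assumed to receive only 75% of group A's spending per unit of need. The names `Patient`, `make_cohort`, and the 0.75 ratio are illustrative assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Patient:
    group: str   # hypothetical group label, "A" or "B"
    need: int    # true illness burden (e.g., count of active chronic conditions)
    cost: float  # historical spending: the proxy label a cost model learns


def make_cohort(spend_ratio_b: float = 0.75) -> list[Patient]:
    """Toy cohort: both groups have identical need (1..10), but group B is
    assumed to receive only `spend_ratio_b` of group A's spending per unit need."""
    cohort = []
    for need in range(1, 11):
        cohort.append(Patient("A", need, cost=1000.0 * need))
        cohort.append(Patient("B", need, cost=1000.0 * need * spend_ratio_b))
    return cohort


def select_top(patients: list[Patient], key, k: int) -> list[Patient]:
    """Pick the k highest-ranked patients for a care-management program."""
    return sorted(patients, key=key, reverse=True)[:k]


def share_of_group(selected: list[Patient], group: str) -> float:
    """Fraction of the selected patients who belong to `group`."""
    return sum(p.group == group for p in selected) / len(selected)


cohort = make_cohort()
by_cost = select_top(cohort, key=lambda p: p.cost, k=6)  # what a cost-trained model ranks on
by_need = select_top(cohort, key=lambda p: p.need, k=6)  # what we actually care about

print(f"Group B share when ranking by cost: {share_of_group(by_cost, 'B'):.2f}")  # 0.33
print(f"Group B share when ranking by need: {share_of_group(by_need, 'B'):.2f}")  # 0.50
```

Both groups are equally sick, yet ranking on cost fills only a third of the program slots with group B patients. Even a model that predicted cost perfectly would reproduce this result, which is the point: the bias lives in the choice of label, not in the model's accuracy.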

The Root Causes: Where Justice Goes Wrong

Understanding why AI perpetuates injustice is crucial for fixing it. The problem isn't just technical; it's systemic, reflecting injustices already embedded in the current healthcare ecosystem.

Data Representation Crisis

Vulnerable populations remain underrepresented in healthcare data, and most datasets used to train AI algorithms are not diverse, disaggregated, or interoperable. When training data predominantly reflects the experiences of advantaged populations, AI systems learn to optimize care for those groups while potentially harming others.
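A first, very rough representation check is to compare each group's share of the training data with its share of the population the model will serve. The sketch below assumes hypothetical counts and an arbitrary 0.8 cutoff; a real audit would also disaggregate within broad categories (by language, region, or age, for example) rather than stop at a handful of labels.

```python
from __future__ import annotations

from collections import Counter


def representation_ratios(train_groups: list[str], population_shares: dict[str, float]) -> dict[str, float]:
    """Share of each group in the training data divided by its share in the
    population the model will serve. 1.0 means proportional representation;
    values below 1.0 mean the group is underrepresented in training."""
    counts = Counter(train_groups)
    total = sum(counts.values())
    return {
        group: (counts.get(group, 0) / total) / share
        for group, share in population_shares.items()
    }


def flag_underrepresented(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Return groups whose ratio falls below an illustrative (not standard) threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical numbers: 1,000 training records vs. the served patient population.
train = ["White"] * 700 + ["Black"] * 80 + ["Hispanic"] * 120 + ["Asian"] * 100
population = {"White": 0.55, "Black": 0.20, "Hispanic": 0.18, "Asian": 0.07}

ratios = representation_ratios(train, population)
for group, ratio in ratios.items():
    print(f"{group:<9} {ratio:.2f}")
print("Underrepresented:", flag_underrepresented(ratios))  # ['Black', 'Hispanic']
```

A ratio like this says nothing about data quality within a group, so it is a screening step, not proof that a dataset is adequate.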

Developer Diversity Gap

The diversity gap begins in medicine itself: in 2018, only 5 percent of active physicians identified as Black and about 6 percent as Hispanic or Latino, and the share of underrepresented developers is even lower. With so little diversity on development teams, fewer people are positioned to recognize biases that could harm marginalized communities.

Geographic Bias

Most of the U.S. patient data used to train clinical AI algorithms comes from just three states (California, Massachusetts, and New York), so the resulting algorithms may not accurately serve patients in rural areas, the South, or other underrepresented regions where healthcare access and delivery patterns differ significantly.

Historical Bias Amplification

Datasets carry implicit bias whether or not they include social category information, because they are generated in an unfair world. AI systems trained on historical data inevitably learn and perpetuate past patterns of discrimination unless we actively intervene.

The Justice Imperative: Building Equity by Design

The good news? Healthcare leaders and researchers are developing comprehensive frameworks to embed justice throughout the AI lifecycle. The emerging consensus is clear: equity cannot be an afterthought. It must be foundational, just like patient safety.

The EDAI Framework

The newly developed EDAI (Equity, Diversity, and Inclusion in AI) framework provides comprehensive, actionable guidelines for integrating equity, diversity, and inclusion (EDI) principles into AI development and deployment. It addresses gaps at the individual, organizational, and systemic levels, offering healthcare systems a roadmap for justice-centered AI implementation.

Health Equity Lifecycle Approaches

The HEAAL (Health Equity Across the AI Lifecycle) framework focuses on applying health equity assessments at each stage of AI development, from data collection to real-world deployment, encouraging iterative assessments to identify and correct potential biases.

Federal Leadership

The October 2023 Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence catalyzed significant action. By December 2023, 28 healthcare providers and payers had voluntarily committed to the safe, secure, and trustworthy use and purchase of AI in healthcare, demonstrating growing institutional commitment to equity principles.

Practical Steps for Healthcare Leaders

For Individual Clinicians:

- Maintain "algorithmic vigilance": question AI recommendations that seem inconsistent with a patient's presentation, especially for patients from underrepresented groups

- Advocate for diverse representation in any AI evaluation committees at your institution

- Request demographic performance data for any AI tools you're asked to use
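Demographic performance data usually comes down to a confusion matrix computed separately for each subgroup. A minimal sketch, assuming binary labels and predictions from a local validation set; the function name and all data below are hypothetical. It shows how a tool with acceptable overall accuracy can still miss far more true cases in one group.

```python
from __future__ import annotations

from collections import defaultdict


def performance_by_group(groups, y_true, y_pred) -> dict[str, dict]:
    """Per-subgroup sample size, sensitivity (true positive rate), and
    positive predictive value for a binary classifier."""
    tallies = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0, "tn": 0})
    for group, truth, pred in zip(groups, y_true, y_pred):
        if pred:
            cell = "tp" if truth else "fp"
        else:
            cell = "fn" if truth else "tn"
        tallies[group][cell] += 1

    report = {}
    for group, c in tallies.items():
        positives = c["tp"] + c["fn"]  # patients who truly have the condition
        flagged = c["tp"] + c["fp"]    # patients the model flagged
        report[group] = {
            "n": sum(c.values()),
            "sensitivity": c["tp"] / positives if positives else None,
            "ppv": c["tp"] / flagged if flagged else None,
        }
    return report


# Hypothetical validation data: overall accuracy is 8/12, but the errors are not evenly spread.
groups = ["A"] * 6 + ["B"] * 6
y_true = [1, 1, 1, 0, 0, 0, 1, 1, 1, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 1, 1, 0, 0, 0, 1, 0]

for group, metrics in performance_by_group(groups, y_true, y_pred).items():
    print(group, metrics)
```

Here group A's sensitivity is 1.0 while group B's is about 0.33: the same tool catches every true case in one group and only one in three in the other. Asking vendors for exactly this kind of breakdown, with sample sizes, is a concrete way to act on the request above.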

For Healthcare Systems:

- Conduct regular equity audits of AI systems to identify and address any exclusion of populations.

- Ensure datasets include diverse demographic groups by actively recruiting underrepresented populations in data collection efforts.

- Implement continuous monitoring with appropriate metrics and standardized bias reporting requirements.
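Continuous monitoring can start as simply as recomputing one fairness metric on each new batch of outcomes and alerting when it drifts. The sketch below tracks the largest between-group gap in true positive rate (often called the equal opportunity difference). The 0.10 threshold, the quarterly batches, and the data are hypothetical assumptions, as are the function names.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class MonitoringResult:
    period: str
    tpr_by_group: dict[str, float]
    gap: float   # largest between-group difference in true positive rate
    alert: bool


def group_tprs(groups, y_true, y_pred) -> dict[str, float]:
    """True positive rate per group; groups with no positive cases are skipped."""
    tprs = {}
    for group in sorted(set(groups)):
        preds_on_positives = [p for g, t, p in zip(groups, y_true, y_pred) if g == group and t == 1]
        if preds_on_positives:
            tprs[group] = sum(preds_on_positives) / len(preds_on_positives)
    return tprs


def monitor(batches, threshold: float = 0.10) -> list[MonitoringResult]:
    """Check each reporting period's TPR gap against an illustrative alert threshold."""
    results = []
    for period, groups, y_true, y_pred in batches:
        tprs = group_tprs(groups, y_true, y_pred)
        gap = max(tprs.values()) - min(tprs.values()) if len(tprs) >= 2 else 0.0
        results.append(MonitoringResult(period, tprs, gap, gap > threshold))
    return results


# Hypothetical quarterly batches of (period, groups, true labels, predictions).
batches = [
    ("2024-Q1", ["A", "A", "B", "B"], [1, 1, 1, 1], [1, 1, 1, 1]),
    ("2024-Q2", ["A", "A", "B", "B"], [1, 1, 1, 1], [1, 1, 1, 0]),
]
for r in monitor(batches):
    print(r.period, r.tpr_by_group, f"gap={r.gap:.2f}", "ALERT" if r.alert else "ok")
```

With small batches these gaps are noisy, so an alert is best treated as a trigger for human review and a standardized bias report, not as an automatic verdict on the tool.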

For AI Developers and Vendors:

- Prioritize diversity in AI development teams, including developers representing different racial and ethnic backgrounds, partnering with community organizations, and centering the perspectives of people with lived experience. I feel this is where having patient advisory councils can make a significant difference.

- Use technical approaches to mitigate discrimination and bias in algorithm development, such as IBM's open-source AI Fairness 360 toolkit.

- Share code and algorithms that can synthesize underrepresented data to address bias, following open science practices.
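As one concrete example of a bias-mitigation technique, here is a from-scratch sketch of reweighing, the preprocessing method described by Kamiran and Calders and implemented in fairness toolkits such as AI Fairness 360. It is a simpler alternative to synthesizing new data: each training example gets a weight so that, in the weighted data, group membership and outcome label are statistically independent. The function names and data are hypothetical, not any library's API.

```python
from __future__ import annotations

from collections import Counter


def reweighing_weights(groups: list[str], labels: list[int]) -> list[float]:
    """Reweighing in the style of Kamiran & Calders:
    w(g, y) = P(g) * P(y) / P(g, y).
    Group/label combinations rarer than independence would predict get
    weights above 1; overrepresented combinations get weights below 1."""
    n = len(groups)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group) -> float:
    """Share of positive labels within one group after applying the weights."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    return positive / total


# Hypothetical training labels: group B has far fewer positive examples (1/4 vs. 4/6).
groups = ["A"] * 6 + ["B"] * 4
labels = [1, 1, 1, 1, 0, 0, 1, 0, 0, 0]
weights = reweighing_weights(groups, labels)

for g in ("A", "B"):
    print(g, round(weighted_positive_rate(groups, labels, weights, g), 2))  # both 0.5
```

The weights can be passed to any learner that accepts per-sample weights; for example, scikit-learn's `LogisticRegression.fit` takes a `sample_weight` argument. Reweighing only removes the statistical association between group and label in the training set; it cannot repair a label that is itself a biased proxy, as in the cost-based risk example.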

The Path Forward: Justice as Innovation Driver

Here's what gives me hope: addressing AI bias isn't just ethically necessary; it's also a competitive advantage. Healthcare systems that proactively tackle equity challenges will build stronger patient trust, reduce liability risks, and deliver better outcomes across all populations.

Fairness and justice principles ensure that AI-driven tools do not create or exacerbate inequalities but rather promote equitable access to healthcare services. When we get this right, AI becomes a powerful tool for reducing the very disparities that have plagued healthcare for generations.

The principle of Justice demands that we move beyond hoping AI will be fair to ensuring that it is fair. This requires vigilance, investment, and the courage to reject AI systems that fail to serve all patients equitably.

The Question for Every Healthcare Leader: Will your AI initiatives advance justice, or will they perpetuate the inequities we've sworn to heal?

In Part 4 of this series, we'll explore how these three principles (Beneficence, Respect for Persons, and Justice) work together to create a comprehensive framework for ethical AI implementation that every healthcare organization can adopt.

What specific steps is your organization taking to ensure AI advances health equity? How do you evaluate whether an AI tool meets the Justice standard? Share your experiences and challenges in the comments below.

References

- Hussain, S.A., Bresnahan, M., & Zhuang, J. (2025). The bias algorithm: how AI in healthcare exacerbates ethnic and racial disparities - a scoping review. Ethnicity & Health, 30(2), 197-214.

- Stone, E. (2024). AI in Healthcare: Counteracting Algorithmic Bias. Deerfield: Journal of the CAS Writing Program.

- Payton, F.C., Hurd, T.C., & Hood, D.B. (2024). AI Algorithms Used in Healthcare Can Perpetuate Bias. Rutgers University-Newark News.

- Obermeyer, Z., Powers, B., Vogeli, C., & Mullainathan, S. (2019). Dissecting racial bias in an algorithm used to manage the health of populations. Science, 366(6464), 447-453.

- Chen, F., Wang, L., Hong, J., Jiang, J., & Zhou, L. (2024). Unmasking bias in artificial intelligence: a systematic review of bias detection and mitigation strategies in electronic health record-based models. Journal of the American Medical Informatics Association, 31(5), 1172-1183.

- Dankwa-Mullan, I. (2024). Health Equity and Ethical Considerations in Using Artificial Intelligence in Public Health and Medicine. Preventing Chronic Disease, 21:240245.

- Colon-Rodriguez, C.J. (2024). Evolving Perspectives on Healthcare Algorithmic and Artificial Intelligence Bias. Office of Minority Health, U.S. Department of Health and Human Services.

- Abbasgholizadeh Rahimi, S., et al. (2024). EDAI Framework for Integrating Equity, Diversity, and Inclusion Throughout the Lifecycle of AI to Improve Health and Oral Health Care. Journal of Medical Internet Research, 26:e63356.

- Green, B.L., Murphy, A., & Robinson, E. (2024). Accelerating health disparities research with artificial intelligence. Frontiers in Digital Health, 6:1330160.

- Crawford, M., Simmons, M., & Turner Lee, N. (2025). Health and AI: Advancing responsible and ethical AI for all communities. Brookings Institution.